Case study

Electronic Health Records

Stanford University’s Emergency Medicine Department from the School of Medicine

Stanford University’s Emergency Medicine Department, part of the School of Medicine, leads the advancement of emergency medicine through innovation and scientific discovery. The department benefits from collaboration with other disciplines at Stanford, within Silicon Valley, and across the globe.

The Goal

The client wanted to build predictive models that use a patient’s Electronic Health Records (EHR) to anticipate the reason for, and the timing of, the patient’s next visit to the emergency room. Specifically, we focused on predicting visits caused by domestic violence.

The Data

The data consisted of more than 300 million patient visits to emergency rooms at different hospitals in the US. Because the data is extremely sensitive, it was anonymized: all identifying patient information was deleted, and only relevant demographic information was kept.
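As an illustration of this kind of anonymization, here is a minimal sketch in Python. The field names, the list of identifiers, and the age-band rule are all hypothetical; the actual schema and de-identification procedure used in the project are not described here.

```python
# Hypothetical set of direct identifiers; the real schema is an assumption.
IDENTIFYING_FIELDS = {"name", "ssn", "address", "phone", "date_of_birth"}


def deidentify(record: dict) -> dict:
    """Return a copy of a visit record with direct identifiers removed."""
    cleaned = {k: v for k, v in record.items() if k not in IDENTIFYING_FIELDS}
    # Assumption: replace the exact age with a coarse ten-year band.
    if "age" in cleaned:
        decade = (cleaned.pop("age") // 10) * 10
        cleaned["age_band"] = f"{decade}-{decade + 9}"
    return cleaned


# Toy record with placeholder values (not real patient data).
visit = {
    "name": "Jane Doe",
    "ssn": "000-00-0000",
    "age": 34,
    "sex": "F",
    "diagnosis_codes": ["CODE_A"],
}
cleaned = deidentify(visit)
```

The cleaned record keeps the demographic fields and the diagnosis codes while dropping anything that directly identifies the patient.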

Our Solution

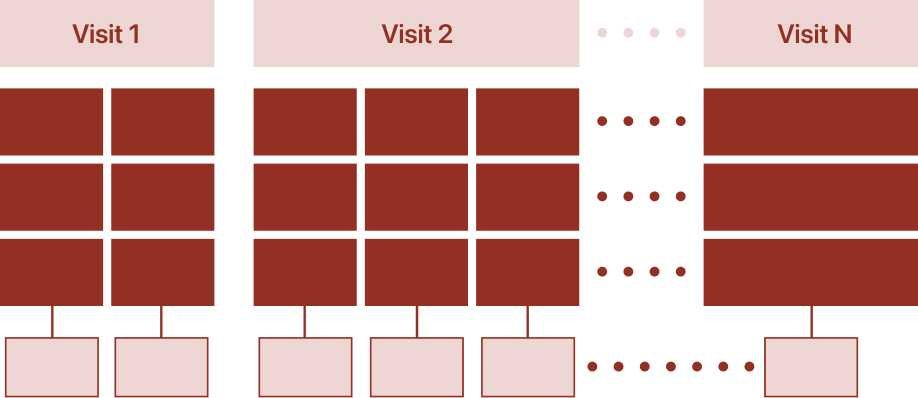

We worked with different transformer architectures, using EHR data as input to predict a patient’s next visit. Our work consisted of pre-training on a large dataset and then fine-tuning on the domestic violence task. With a pre-trained model in hand, we could fine-tune on new tasks (such as respiratory problems or heart disease) using smaller amounts of data. This ability to scale to new tasks at low cost is one of the main benefits of the approach we selected.
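To feed EHR data into a transformer, each patient history first has to become a sequence of tokens. The sketch below shows one plausible way to do that and to build a next-visit training example. The special tokens, the placeholder codes (`CODE_A` and so on), and the function names are assumptions made for illustration, not the encoding actually used in the project.

```python
from typing import Dict, List, Tuple

# BERT-style special tokens, assumed here for illustration.
SPECIAL_TOKENS = ["[PAD]", "[CLS]", "[SEP]", "[UNK]"]

Visit = List[str]      # one visit = a list of medical codes
History = List[Visit]  # one patient = an ordered list of visits


def build_vocab(histories: List[History]) -> Dict[str, int]:
    """Assign an integer id to every code seen in the data."""
    vocab = {tok: i for i, tok in enumerate(SPECIAL_TOKENS)}
    for visits in histories:
        for visit in visits:
            for code in visit:
                vocab.setdefault(code, len(vocab))
    return vocab


def encode_history(visits: History, vocab: Dict[str, int]) -> List[int]:
    """Flatten visits into one id sequence, separating visits with [SEP]."""
    ids = [vocab["[CLS]"]]
    for visit in visits:
        ids.extend(vocab.get(code, vocab["[UNK]"]) for code in visit)
        ids.append(vocab["[SEP]"])
    return ids


def next_visit_example(
    visits: History, vocab: Dict[str, int]
) -> Tuple[List[int], List[int]]:
    """Input: all visits except the last. Target: codes of the last visit."""
    *past, last = visits
    target = [vocab.get(code, vocab["[UNK]"]) for code in last]
    return encode_history(past, vocab), target


# Toy history with placeholder codes (not real patient data).
histories = [[["CODE_A", "CODE_B"], ["CODE_C"], ["CODE_A"]]]
vocab = build_vocab(histories)
inputs, target = next_visit_example(histories[0], vocab)
```

Under this scheme, pre-training and fine-tuning can share the same vocabulary and input format, so only the prediction target changes when moving to a new task.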

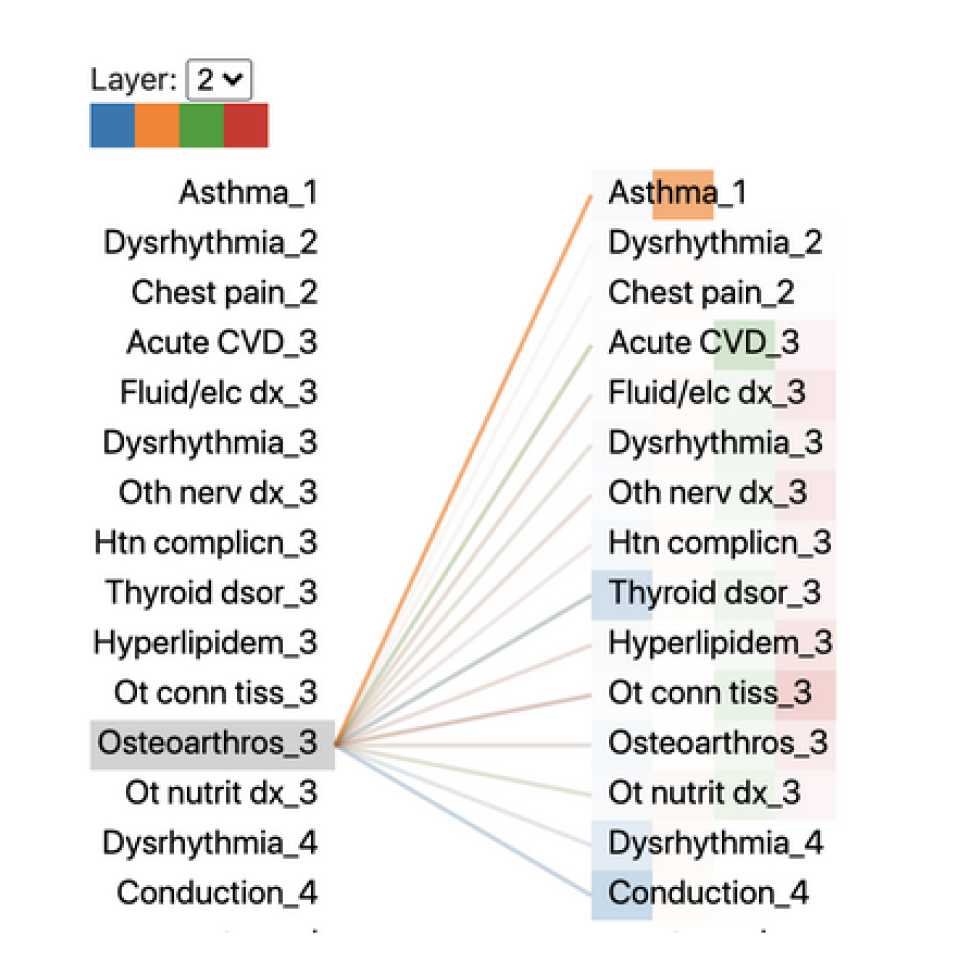

Additionally, we worked with visualizations of the attention layers to add explainability to our predictions. This means that we not only predict why a patient might return but also analyze which parts of the patient’s history lead to that prediction.
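The idea behind attention-based explainability can be sketched with plain scaled dot-product attention: the weights show how strongly a prediction attends to each past visit. The vectors below are toy values chosen by hand, and the ranking step is only one simple way to read the weights; the project's actual visualizations are not reproduced here.

```python
import math
from typing import List


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query: List[float], keys: List[List[float]]) -> List[float]:
    """Scaled dot-product attention weights of one query over all keys."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)


# Toy embeddings: one key vector per past visit (hypothetical values).
visit_labels = ["visit 1", "visit 2", "visit 3"]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
query = [2.0, 2.0]  # representation used to make the prediction

weights = attention_weights(query, keys)
# Rank past visits by how much attention they receive.
ranked = sorted(zip(visit_labels, weights), key=lambda pair: pair[1], reverse=True)
```

Plotting such weights as a heat map over the patient's history is a common way to inspect which earlier visits the model relies on most.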

Technology

Transformers are popular architectures because they do not share the limitations of LSTMs when dealing with long-term dependencies. They are widely used in natural language processing, with Google’s BERT being one of the best-known implementations.

We modified existing transformers, pre-trained and fine-tuned our own on our dataset, and achieved state-of-the-art results.

Results
